Who needs broadcasting with guaranteed low latency? In fact, there are many uses for it, for instance online video auctions. Imagine you are an auctioneer.

– “Two hundred thousand, one”

– “Sold!”

With high latency, you will end up saying “two hundred thousand, three” before the video of your “one” even reaches the participants. For them to bid in time, latency must be low.

Simply put, low latency is vital for any game-like scenario, be it an online video auction, a video broadcast of horse races or an online version of the “Jeopardy” game. All these applications require guaranteed low latency and real-time transmission of video and audio.

Why CDN

A single server is often unable to deliver streams to all viewers, simply because spectators are geographically dispersed and have internet connections of varying bandwidth. Besides, one server may simply not be enough to handle the load. Hence several servers are needed to deliver streams to recipients, and that is the definition of a CDN, a content delivery network. The content in this case is low latency streaming video transmitted from the web camera of the broadcasting user to all viewers.

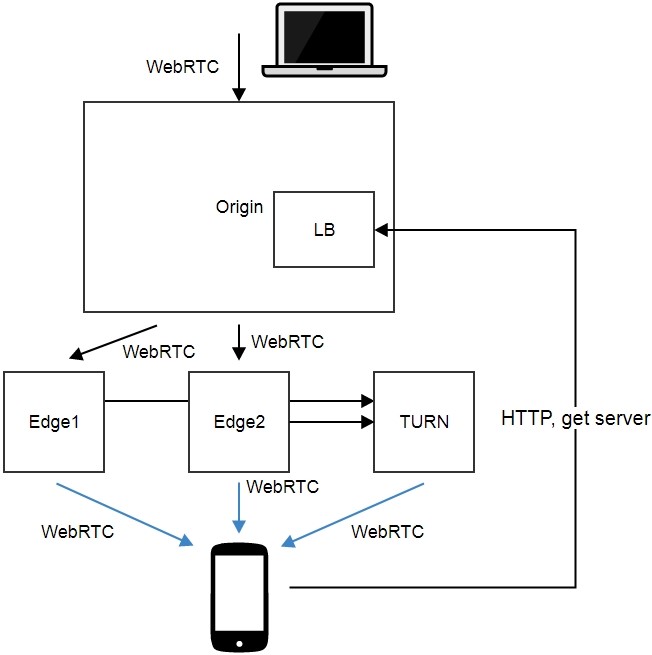

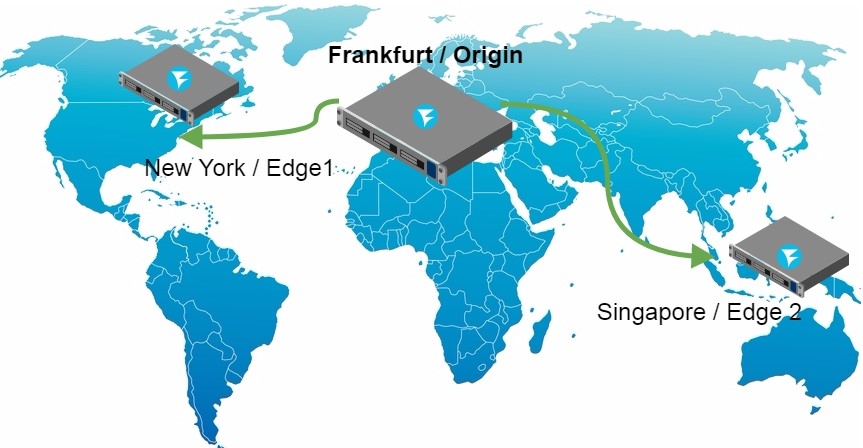

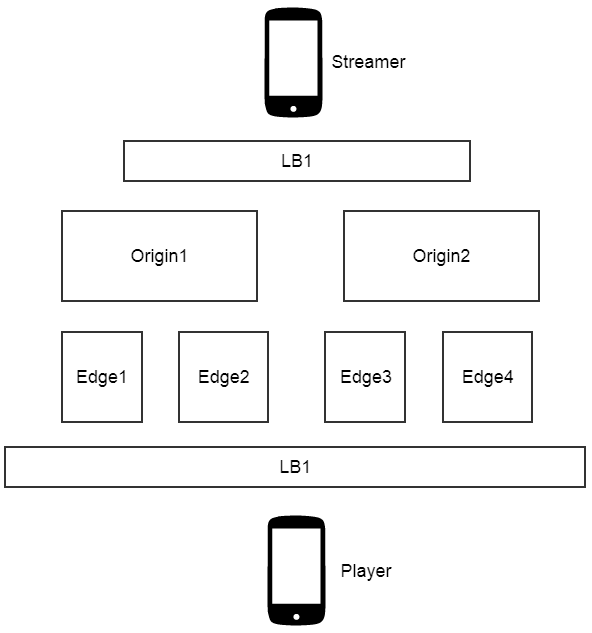

In this article we explain how to build a geographically distributed mini CDN and test the result. Our CDN will consist of four servers, the bare minimum for this scheme, working as follows:

1) Origin + LB

This server receives the video stream broadcasted from the web camera of the user.

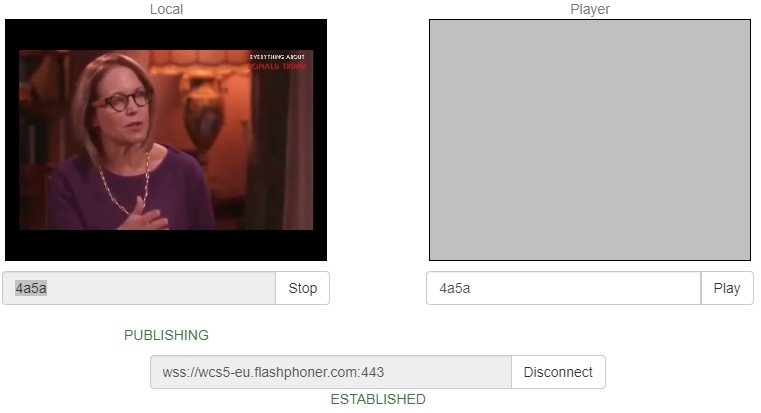

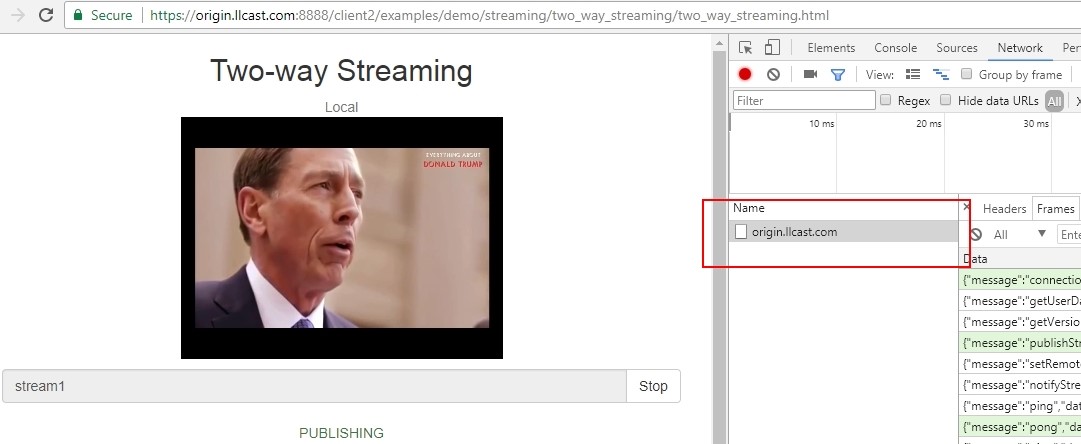

Stream transmission in a browser looks like this:

An example of sending a video stream from the web page opened in Google Chrome. The stream is sent to the test server, origin.llcast.com.

Once the Origin accepts the video stream, it rebroadcasts it to two servers: Edge1 and Edge2. The Origin server itself does not serve streams to viewers.

For the sake of simplicity, we assign the load balancing function to the Origin server. The server queries the Edge nodes and tells clients which node they should connect to.

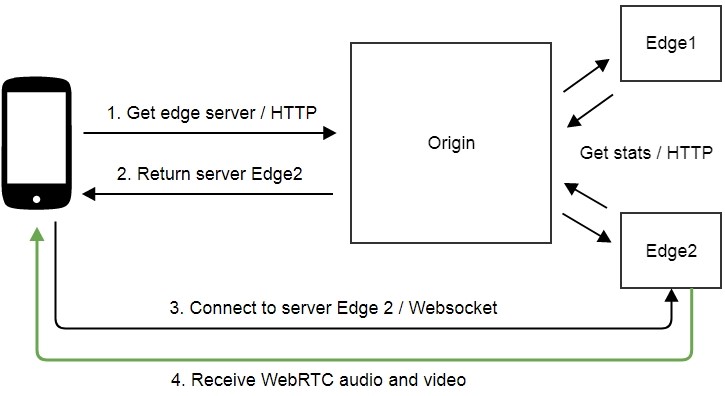

Here is how load balancing works:

1. A browser queries the load balancer (in our case it is the Origin).

2. The balancer returns one of two servers: Edge1 or Edge2.

3. The browser establishes a connection to the server (for instance, the Edge2) via Websocket.

4. The Edge2 server sends the video traffic using WebRTC.

The Origin periodically queries Edge1 and Edge2 to check their availability and collect load data. This is enough to decide which of the servers should accept the next connection request. If one of the servers is unavailable for some reason, all connection requests go to the other Edge server.
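The round-robin choice with an availability check can be sketched as follows. This is a minimal illustration, assuming the Origin keeps an `available` flag per Edge that its periodic health check updates; `EdgeNode`, `RoundRobinBalancer` and `pick` are hypothetical names, not WCS internals.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class EdgeNode:
    host: str
    # Set by the Origin's periodic health check (hypothetical field).
    available: bool = True


@dataclass
class RoundRobinBalancer:
    nodes: List[EdgeNode]
    # Index of the node to try first on the next request.
    _next: int = field(default=0, init=False)

    def pick(self) -> Optional[EdgeNode]:
        """Return the next available Edge in round-robin order, or None if all are down."""
        count = len(self.nodes)
        for step in range(count):
            index = (self._next + step) % count
            node = self.nodes[index]
            if node.available:
                # Continue from the node after the one we hand out.
                self._next = (index + 1) % count
                return node
        return None
```

With both Edges healthy, requests alternate between edge1 and edge2; once a node is marked unavailable, every request lands on the remaining one. The sketch covers only plain round robin and ignores the collected load figures.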

2) Edge1 and Edge2

These are servers that actually perform broadcasting of audio and video traffic to spectators. Each of the servers receives a stream from the Origin server and provides the Origin server with information about its load.

3) TURN relay server

Truly low latency requires the entire audio and video delivery system to work over UDP. However, UDP may be blocked by network firewalls, so sometimes the only option is to send the traffic through port 443 (HTTPS), which is usually open. This is what the TURN relay is for: if a UDP connection fails, the video is transmitted via TURN over TCP. Of course this increases latency, but if most of your spectators are behind corporate firewalls, you will still need TURN for WebRTC or some other way to deliver content over TCP, such as Media Source Extensions / Websocket.

In this case we dedicate one server specifically for delivery through firewalls. It will broadcast video via WebRTC / TCP for those spectators who are not lucky enough to have a normal UDP connection.

Geographical distribution

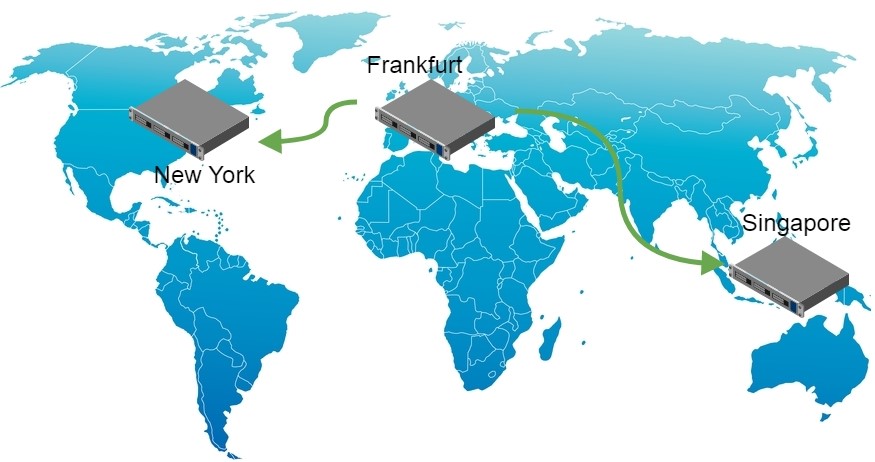

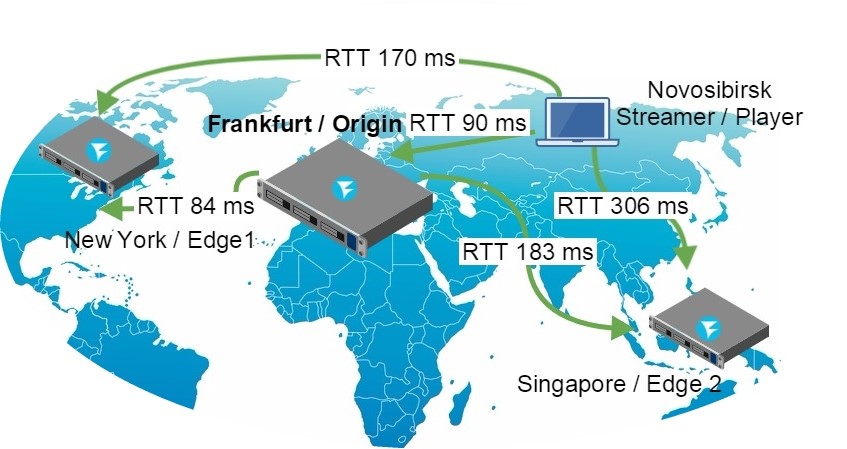

The Origin server is located in a datacenter in Frankfurt. The Edge1 server is located in New York, and Edge2 is in Singapore. Therefore, we end up with a widely distributed broadcasting network that covers the major part of the globe.

Installing and running the servers

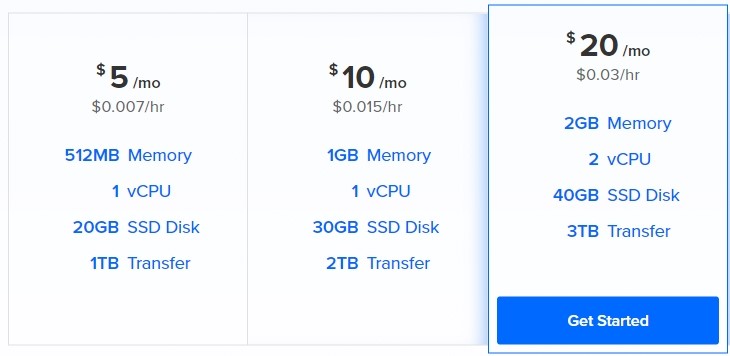

To keep testing cheap, we rented virtual servers at Digital Ocean.

We need 4 droplets (virtual servers) on DO with 2 GB of RAM each. Together they cost 12 cents an hour, or about 3 dollars per day.

For the Origin, Edge1 and Edge2 servers we will use the WCS5 WebRTC server. It should be downloaded and installed on each of these droplets.

The install process of the WCS server looks as follows:

1) Download the package.

wget https://flashphoner.com/download-wcs5-server.tar.gz

2) Unpack and install.

tar -xvzf download-wcs5-server.tar.gz

cd FlashphonerWebCallServer-5.0.2505

./install.sh

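Also install haveged, which may be needed to speed up the start of the WCS server on Digital Ocean: it feeds the kernel entropy pool, which on a fresh virtual machine can otherwise be too low and slow down startup.

yum install epel-release

yum install haveged

haveged

chkconfig haveged on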

3) Start.

service webcallserver start

4) Create Let’s Encrypt certificates

wget https://dl.eff.org/certbot-auto

chmod a+x certbot-auto

./certbot-auto certonly

Certbot puts the certificates into /etc/letsencrypt/live, in a subdirectory named after the domain.

Example:

/etc/letsencrypt/live/origin.llcast.com

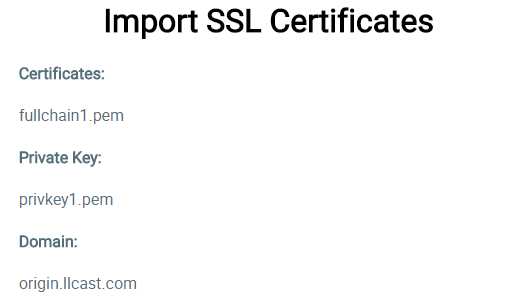

6) Import Let’sencrypt certificates

├── cert.pem

├── chain.pem

├── fullchain.pem

├── fullchain_privkey.pem

├── privkey.pem

└── README

We need two files from those: the chain of certificates and the private key:

- fullchain.pem

- privkey.pem
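Before uploading, it can help to confirm that both files actually exist for the domain. A minimal sketch, assuming Certbot's default /etc/letsencrypt/live layout; `certificate_paths` and `missing_certificate_files` are hypothetical helpers, not part of WCS or Certbot.

```python
from pathlib import Path
from typing import Dict, List

# The two files the WCS certificate import needs.
REQUIRED_FILES = ("fullchain.pem", "privkey.pem")


def certificate_paths(domain: str, live_dir: str = "/etc/letsencrypt/live") -> Dict[str, Path]:
    """Map each required file name to its expected path under Certbot's live directory."""
    base = Path(live_dir) / domain
    return {name: base / name for name in REQUIRED_FILES}


def missing_certificate_files(domain: str, live_dir: str = "/etc/letsencrypt/live") -> List[str]:
    """Return the required files that are absent; an empty list means ready to upload."""
    # is_file() follows symlinks, which matters because Certbot's live/ entries
    # are symlinks into the archive/ directory.
    return [name for name, path in certificate_paths(domain, live_dir).items() if not path.is_file()]


if __name__ == "__main__":
    missing = missing_certificate_files("origin.llcast.com")
    print("ready to upload" if not missing else f"missing: {missing}")
```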

To open the admin panel of the WCS server, go to https://origin.llcast.com:8888, where origin.llcast.com is the domain name of your WCS server. Then upload both files in Dashboard / Security / Certificates.

The result of import looks as follows:

Done! The import is successful, and now we need to run a short test to make sure everything works as it should. In the server's Dashboard, open one of the demos, for example Two Way Streaming. Click the Publish button and, after a few seconds, click the Play button. Make sure the server works, accepts streams and sends them for playback.

After testing one server, do the same with the other two (Edge1 and Edge2): install WCS5 on them, obtain Let’s Encrypt certificates and import them into the installed WCS server. Finally, run a simple test to check that each server responds and plays streams.

As a result, we have configured three servers available at:

https://origin.llcast.com:8888

https://edge1.llcast.com:8888

https://edge2.llcast.com:8888

Configuring the Origin server

Now we need to configure the servers and merge them into CDN. Let’s start with the Origin server.

As we wrote before, the Origin also works as a balancer. Open the WCS_HOME/conf/loadbalancing.xml configuration file and specify the Edge nodes there. In our case, the config lists two nodes, each with its Websocket port:

edge1.llcast.com, port 443

edge2.llcast.com, port 443

The config describes the following settings:

- Which nodes the stream is rebroadcasted to by the Origin server (edge1 and edge2).

- What technology is used for rebroadcasting (webrtc).

- What port should be used for Websocket connection to the edge node (443).

- The balancing mode (roundrobin).

Currently two ways to rebroadcast from Origin to Edge are supported, set by the stream_distribution attribute, namely:

- webrtc

- rtmp

We set webrtc here because we are aiming for low latency. Next, we need to tell the Origin server that it is also a load balancer. Enable the balancer in the WCS_HOME/conf/server.properties config:

load_balancing_enabled=true

Besides, we move the balancer to port 443, so that connecting clients can receive data from it via standard HTTPS, once again to get past firewalls. In the same config, server.properties, we add:

https.port=443
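Editing server.properties by hand is all that is required. If you repeat the setup on several servers, a small script can apply the same keys every time. A minimal sketch, assuming the file uses plain `key=value` (or `key:value`) lines; `set_properties` is a hypothetical helper, not part of WCS.

```python
import re
from typing import Dict


def set_properties(text: str, updates: Dict[str, str]) -> str:
    """Set key=value pairs in .properties text: replace existing keys, append missing ones.

    Only '=' and ':' separators are recognized; comment lines (# or !) are left untouched.
    """
    lines = text.splitlines()
    remaining = dict(updates)
    for i, line in enumerate(lines):
        # Capture the key of "key=value", "key = value" or "key:value".
        match = re.match(r"\s*([^#!\s=:][^=:\s]*)\s*[=:]", line)
        if match and match.group(1) in remaining:
            key = match.group(1)
            lines[i] = f"{key}={remaining.pop(key)}"
    # Keys that were not present in the file go at the end.
    lines.extend(f"{key}={value}" for key, value in remaining.items())
    return "\n".join(lines) + "\n"
```

On the Origin this would be called with `{"load_balancing_enabled": "true", "https.port": "443"}`, for example as `path.write_text(set_properties(path.read_text(), updates))` with `path` pointing at WCS_HOME/conf/server.properties, followed by a server restart.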

Then, we restart the WCS server to apply the changes made.

service webcallserver restart

So, we have turned on the balancer and configured it to work on the port 443 in the server.properties config, plus we configured Origin-Edge rebroadcasting in the loadbalancing.xml config.

Configuring the Edge servers

The Edge servers do not require any specific configuration. Both simply accept a stream from the Origin server as if a user sent this stream from his or her web camera directly to this Edge server.

Still, one setting is needed: we switch the Websocket port of the streaming servers to 443 for better firewall “penetration”. If the Edge servers accept connections on port 443 via HTTPS, and TURN does the same, we get a working way to bypass firewalls that potentially extends the reach of the broadcast to users behind corporate firewalls.

Edit the WCS_HOME/conf/server.properties config and set this parameter:

wss.port=443

Restart the WCS server to apply the changes made.

service webcallserver restart

Edge server configuration is now finished, and we can test the CDN.

Broadcasting the web camera to Origin

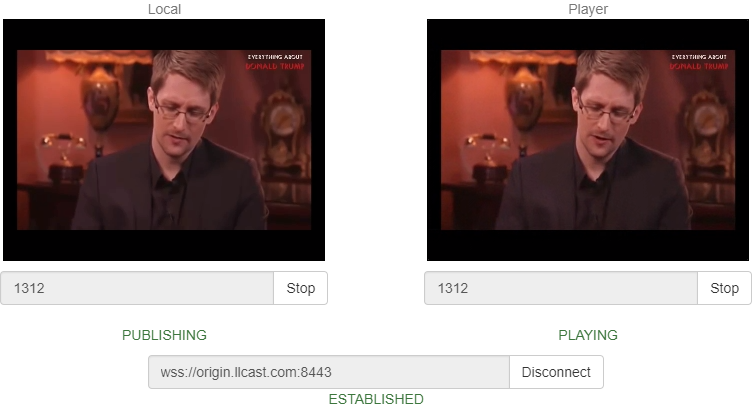

Users of our mini CDN can be divided into those who stream and those who play. Streamers broadcast video to the CDN, while spectators watch it. The interface on the streamer's side can look like this:

The simplest way to test this interface is to open the Two Way Streaming demo on the Origin server.

Here is an example of a streamer on the Origin server:

https://origin.llcast.com:8888/demo2/two-way-streaming

For the test, we need to establish a Websocket connection to the server by clicking the Connect button and send a video stream to the server by clicking the Publish button. If everything is configured properly, the stream is sent to the Edge1 and Edge2 servers and can be played from there.

The presented interface is just a demo of an HTML page based on JavaScript client API (Web SDK). Surely you can customize it and make it look any way you want with HTML / CSS.

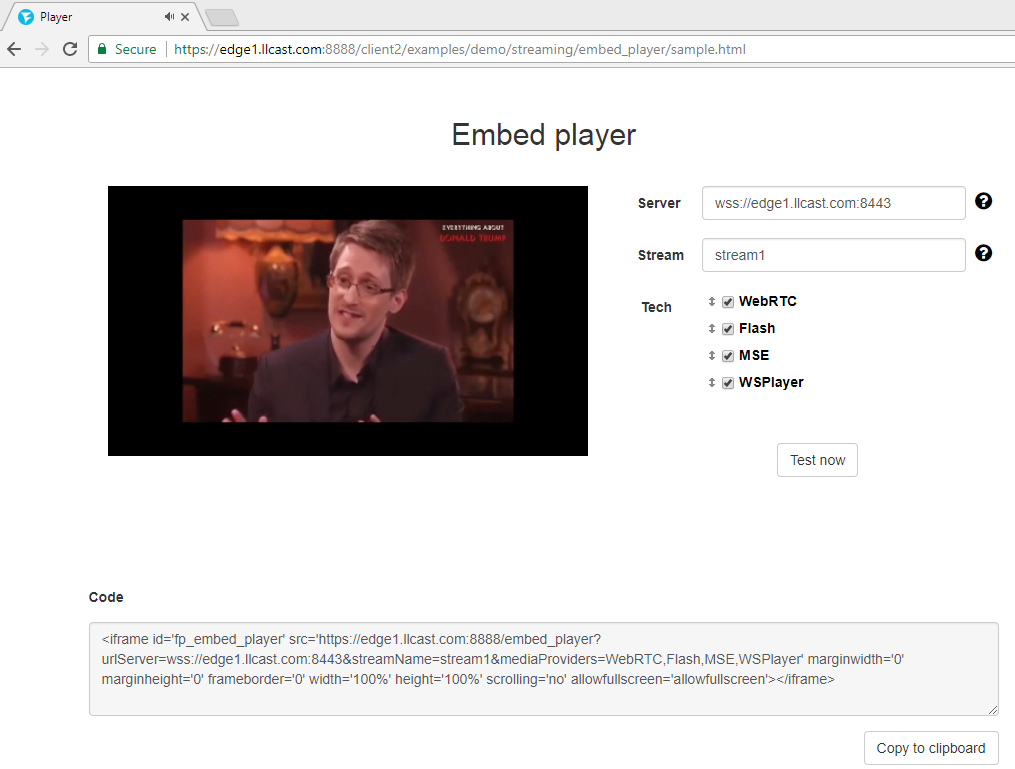

Playing a stream from Edge1 and Edge2 servers

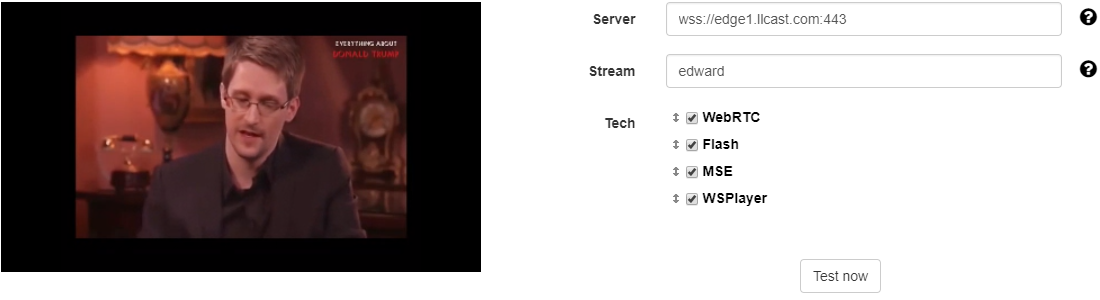

The player interface can be taken from one of the demo servers, edge1.llcast.com or edge2.llcast.com, for example Demo / Embed Player.

To test, connect directly to the Edge servers using the links below:

Edge1

https://edge1.llcast.com:8888/demo2/embed-player

Edge2

https://edge2.llcast.com:8888/demo2/embed-player

The player establishes a Websocket connection with the specified Edge server and fetches a video stream from that server via WebRTC. Note that on Edge servers a Websocket connection is established to the port 443 that we configured (see above) to bypass firewalls.

This player can be embedded in a website as an iframe, or you can integrate it more tightly using the JavaScript API, or develop your own design with HTML / CSS.

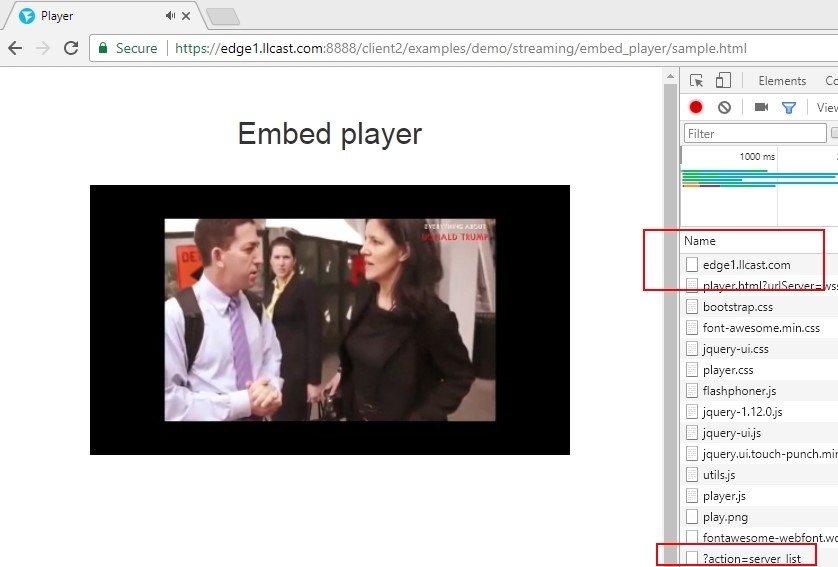

Activating the load balancer in the player

Above, we made sure that the Origin server receives a video stream and replicates it to two Edge servers. We connected to each of those Edge servers and tested playback of the stream.

Now, we need to use the balancer so that the player chooses the best Edge server from the pool automatically.

To do this, in the JavaScript code of the player, specify the address of the balancer – lbUrl. Once you do, the Embed Player example will query the load balancer first, obtain a recommended address of the server from it, and then establish connection to the received address via Websocket.

Testing the load balancer URL is easy. Open it in a browser:

https://origin.llcast.com/?action=server_list

As a result, you receive a chunk of JSON in which the balancer names the recommended Edge server:

{"server": "edge1.llcast.com", "flash": "1935", "ws": "8080", "wss": "443"}

In this particular case, the balancer returned the first server edge1.llcast.com and said that a secure Websocket connection (wss) can be established to it on the port 443.
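When lbUrl is set, the player does this lookup itself. The sketch below only illustrates the same logic in Python: turn the server_list response into a secure Websocket URL, and fall back to a fixed server if the balancer cannot be reached. The fallback behavior is an assumption for illustration; `websocket_url` and `resolve_server` are hypothetical names, not part of the Web SDK.

```python
import json
from typing import Callable


def websocket_url(response_body: str) -> str:
    """Build a secure Websocket URL from the balancer's server_list JSON."""
    data = json.loads(response_body)
    host = data["server"]
    port = int(data["wss"])  # ports arrive as strings, e.g. "443"
    return f"wss://{host}:{port}"


def resolve_server(fetch: Callable[[], str], fallback_url: str) -> str:
    """Ask the balancer for a server; use fallback_url if the request or parsing fails."""
    try:
        return websocket_url(fetch())
    except (OSError, ValueError, KeyError, TypeError):
        return fallback_url
```

In real use, `fetch` could be `lambda: urllib.request.urlopen("https://origin.llcast.com/?action=server_list", timeout=5).read().decode()`; network errors from urlopen are subclasses of OSError, so they trigger the fallback.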

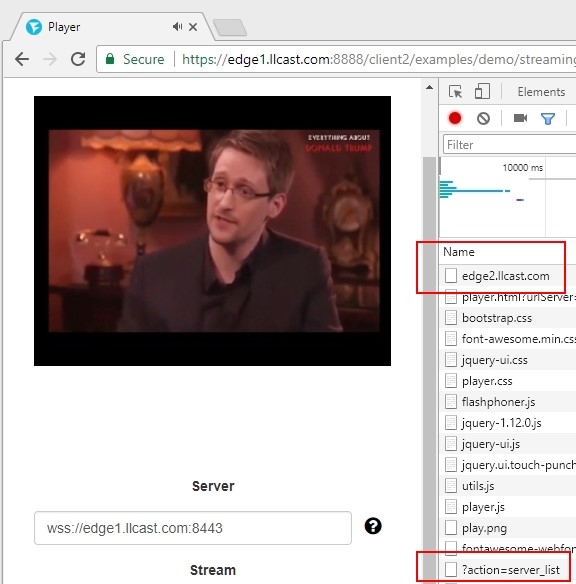

If you keep reloading the page in the browser, the balancer returns one Edge server or the other, over and over. That is how round robin works.

Now, we only need to enable the balancer in the player. Add the lbUrl parameter to the code of the player in player.js when the connection session to the server is created:

Flashphoner.createSession({urlServer: urlServer, mediaOptions: mediaOptions, lbUrl:'https://origin.llcast.com/?action=server_list'})

The full source code of the player can be found here:

https://edge1.llcast.com:8888/client2/examples/demo/streaming/embed_player/player.js

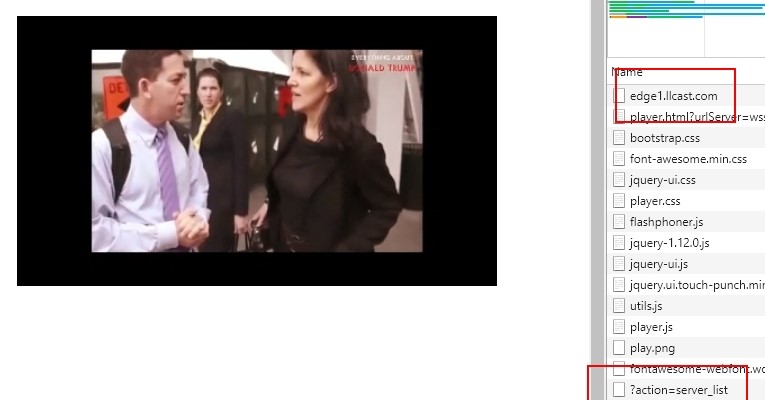

Now, make sure the balancer works and correctly distributes connections between the two nodes, edge1.llcast.com and edge2.llcast.com. To do this, simply refresh the page with the player and look at the Network tab in Developer Tools, where you can see the balancer directing the player to the server edge1.llcast.com (red frame).

https://edge1.llcast.com:8888/client2/examples/demo/streaming/embed_player/player.html

Final test

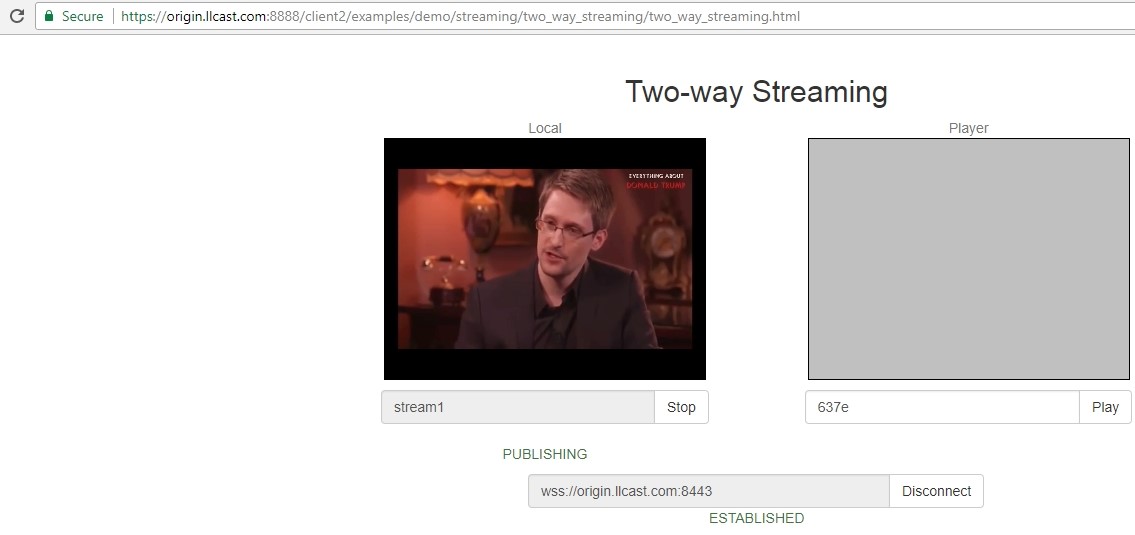

We have configured Origin-Edge rebroadcasting and load balancing, and made the player connect to the Edge server chosen by the load balancer. Now we need to put it all together and run a test demonstrating how the resulting CDN works.

1. Open the page of the streamer

https://origin.llcast.com:8888/client2/examples/demo/streaming/two_way_streaming/two_way_streaming.html

From Google Chrome, send a video stream named stream1 from the web camera to the Origin server.

Open Dev Tools – Network and make sure the broadcasting browser has connected to the Origin server and sent the stream to it.

2. Open the page of the player on the Edge server and play the stream1 stream.

https://edge1.llcast.com:8888/client2/examples/demo/streaming/embed_player/sample.html

As we can see, the balancer offered edge1.llcast.com as the Edge server, so the stream is fetched from this server.

Then we reload the page once more and see that the balancer now connects the player to the edge2.llcast.com server located in Singapore.

Hence, we resolved the first part of the task and successfully created a geographically dispersed low latency CDN based on WebRTC.

Next, we need to measure the latency and make sure it is indeed low compared to RTT. For a worldwide network, good latency means stable latency of no more than 1 second. Let's see what we have got here.

Measuring latency

We expect latency to differ depending on which server the player connects to: Edge1 in New York or Edge2 in Singapore.

To establish a baseline for the measurements, let's map the RTTs to understand how our test network behaves and what we can expect from each node. We assume there is little or no packet loss, so we measure only the ping (RTT).

As you can see, our test network is indeed global and very uneven in terms of latency. The streamer and the player are located in Novosibirsk. Ping to the Edge1 server in New York is 170 ms, and to Edge2 in Singapore is 306 ms.

We will take these data into account when analyzing the test results. For example, if we broadcast a stream to the Origin in Frankfurt, then send it to Edge2 in Singapore and play it in Novosibirsk, the total latency will hardly be less than half the RTT along that path: (90 + 183 + 306) / 2 ≈ 290 milliseconds.
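The same lower-bound estimate can be computed for both routes: sum the RTTs of the hops along the delivery path and halve the result, since one-way delay is roughly half the round trip. The RTT values are those given in the text; the Frankfurt to New York hop (84 ms) is the figure the article uses in its Edge1 RTT sum. The function names are illustrative.

```python
from typing import Dict, List, Tuple

# Round-trip times (ms) between the points of the test network.
RTT_MS: Dict[Tuple[str, str], int] = {
    ("Novosibirsk", "Frankfurt"): 90,
    ("Frankfurt", "New York"): 84,
    ("Frankfurt", "Singapore"): 183,
    ("New York", "Novosibirsk"): 170,
    ("Singapore", "Novosibirsk"): 306,
}


def path_rtt(path: List[str]) -> int:
    """Sum the RTTs of consecutive hops along the delivery path."""
    return sum(RTT_MS[(a, b)] for a, b in zip(path, path[1:]))


def min_latency_estimate(path: List[str]) -> float:
    """Lower-bound estimate: each hop's one-way delay is about half its RTT."""
    return path_rtt(path) / 2


EDGE1_ROUTE = ["Novosibirsk", "Frankfurt", "New York", "Novosibirsk"]
EDGE2_ROUTE = ["Novosibirsk", "Frankfurt", "Singapore", "Novosibirsk"]
```

For the Edge2 route this gives 289.5 ms, the roughly 290 ms quoted in the text; for the Edge1 route it gives 172 ms.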

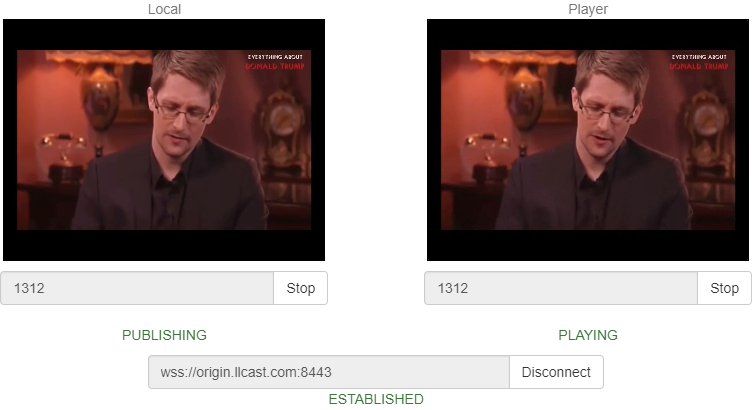

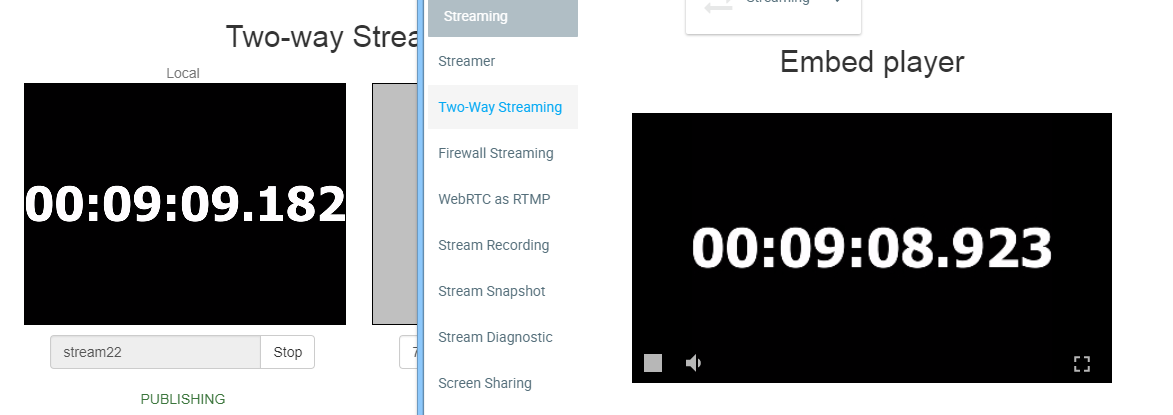

Now, let’s test this assumption in practice. To measure latency, we use the millisecond timer test from here as a virtual camera.

We send the stream to the Origin and take a few screenshots while the stream plays on the Edge1 server. On the left is the local video from the zero-latency camera, and on the right is the video that reached the player via the CDN.

Test results:

| Test number |

Latency (ms) |

|

1 |

386 |

|

2 |

325 |

|

3 |

384 |

|

4 |

387 |

|

Average latency: 370 ms |

|

As you can see, the average latency of the video stream along the delivery path from Novosibirsk (streamer) to Frankfurt (Origin) to New York (Edge1) and back to Novosibirsk (player) was 370 milliseconds. The RTT along the same path is 90 + 84 + 170 = 344 milliseconds, so the measured latency is about 1.08 RTT (370 / 344). That is not bad at all, considering that the ideal result would be 0.5 RTT, i.e. pure one-way network delay.

Latency and RTT for the Edge1 (New York) route

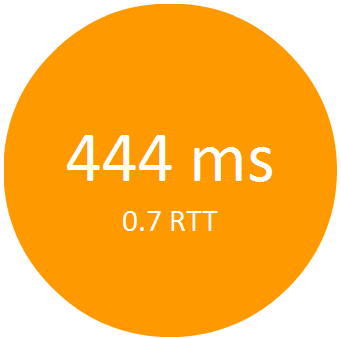

Now, let’s fetch the same stream from the Edge2 server in Singapore and see if increased RTT has any effect on latency. After running the same test with the Edge2 server, we receive the following results:

| Test number | Latency (ms) |
|---|---|
| 1 | 436 |
| 2 | 448 |
| 3 | 449 |

Average latency: 444 ms

This test gives a latency-to-RTT ratio of about 0.77 (444 / (90 + 183 + 306) = 444 / 579), which is better than in the previous test.

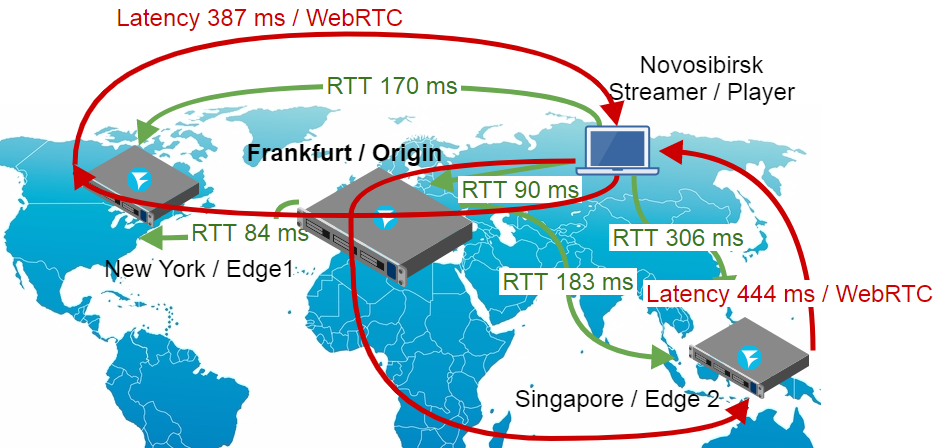

Latency and RTT for the Edge2 (Singapore) route

The results of the test suggest that as the value of RTT increases, the value of latency will gradually approach 0.5 RTT.
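The latency-to-RTT ratios can be recomputed directly from the two result tables. A small sketch: the latencies are the measured values, and each path RTT is the sum of the hop RTTs for that route; 0.5 corresponds to the ideal one-way delay.

```python
from statistics import mean

# Measured latencies (ms) from the two test runs.
EDGE1_LATENCY = [386, 325, 384, 387]
EDGE2_LATENCY = [436, 448, 449]
EDGE1_PATH_RTT = 90 + 84 + 170   # Novosibirsk -> Frankfurt -> New York -> Novosibirsk
EDGE2_PATH_RTT = 90 + 183 + 306  # Novosibirsk -> Frankfurt -> Singapore -> Novosibirsk


def latency_to_rtt(latencies, path_rtt):
    """Average measured latency as a fraction of the path RTT (0.5 = ideal one-way delay)."""
    return mean(latencies) / path_rtt


for name, latencies, rtt in [
    ("Edge1", EDGE1_LATENCY, EDGE1_PATH_RTT),
    ("Edge2", EDGE2_LATENCY, EDGE2_PATH_RTT),
]:
    ratio = latency_to_rtt(latencies, rtt)
    print(f"{name}: avg {mean(latencies):.0f} ms, path RTT {rtt} ms, ratio {ratio:.2f}")
```

The ratio drops from about 1.08 on the New York route to about 0.77 on the Singapore route; with only two routes measured, this is a trend worth confirming rather than a firm rule.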

Either way, we achieved the goal of creating a distributed CDN with latency under one second, and now we have room for further tests and optimizations.

Conclusion

To sum up, we deployed three servers, Origin, Edge1 and Edge2, not four as promised at the beginning of this article. Setting up, configuring and testing the TURN server behind a firewall would inflate this already bulky material even further, so we plan to cover that part in detail in a separate article. For now, simply keep in mind that the fourth server in this setup is a TURN server that serves WebRTC browsers via port 443, allowing them to bypass firewall limitations.

So, a CDN for WebRTC streams is configured and the latency is measured. The final diagram of the entire system is the following:

So, how can this scheme be made even better? One way is to scale it horizontally. For instance, we can add several Origin servers and put an additional balancer in front of them. This way, we will balance not only spectators but also streamers who broadcast content from web cameras:

The CDN is open for demo access at llcast.com. We may shut it down in the future, in which case the demo links will stop working. But for now, if you want to measure latency yourself, you are welcome to try. Good streaming!

Links

https://origin.llcast.com:8888 – the Origin demo server

https://edge1.llcast.com:8888 – the Edge1 demo server

https://edge2.llcast.com:8888 – the Edge2 demo server

Two Way Streaming – the demo interface of broadcasting a web camera stream to Origin.

Embed Player – the demo interface of the player with a load balancer between Edge1 and Edge2

WCS5 – the WebRTC server the CDN is built on